About cluster computing

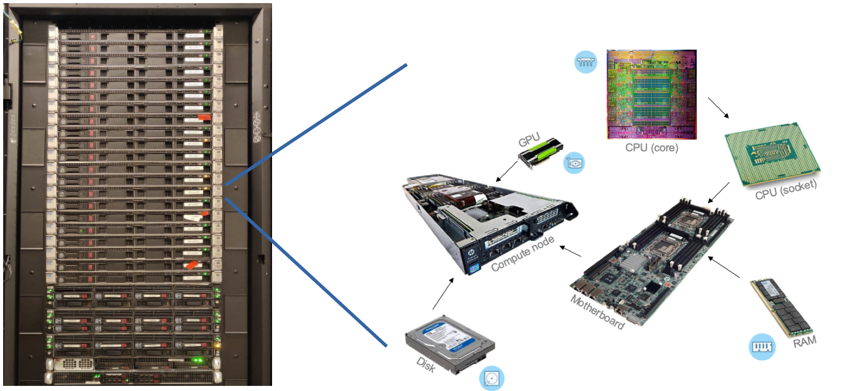

The CISM manages several compute clusters. A cluster is made of computers (nodes) that are interconnected and appear to the user as one large machine. The cluster is accessed through a login node (or frontend node), where users manage their jobs and the data in their home directory. Each compute node has a certain number of processors, a certain amount of memory (RAM), some local storage (scratch space), and possibly several accelerators (GPUs). Each processor comprises several independent computing units (cores). In a hardware context, a CPU is often understood as a processor die, which you can buy from a vendor and which fits into a socket on the motherboard, while in a software context, a CPU is often understood as one compute unit, a.k.a. a core. All the resources are managed by a Resource Manager/Job Scheduler, to which users must submit batch jobs. The jobs enter a queue and are allocated resources based on priorities and policies. The resource allocation is exclusive: when a job starts, it has exclusive access to the resources it requested at submission time.

The software installed on the clusters to manage resources is called Slurm; an introductory tutorial can be found here.
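As an illustration of what "requesting resources at submission time" looks like, the following minimal sketch generates the text of a Slurm batch script. The helper name `make_batch_script`, the job name, the program path and all numeric values are hypothetical; only the `#SBATCH` options themselves (`--job-name`, `--ntasks`, `--cpus-per-task`, `--mem-per-cpu`, `--time`) are standard Slurm options. The appropriate values depend on the cluster and on the job.

```python
def make_batch_script(job_name, ntasks, cpus_per_task, mem_per_cpu_mb,
                      time_limit, command):
    """Return the text of a Slurm batch script requesting the given resources.

    All arguments are illustrative; time_limit uses the HH:MM:SS form.
    """
    lines = [
        "#!/bin/bash",
        f"#SBATCH --job-name={job_name}",
        f"#SBATCH --ntasks={ntasks}",                # number of tasks (processes)
        f"#SBATCH --cpus-per-task={cpus_per_task}",  # cores per task
        f"#SBATCH --mem-per-cpu={mem_per_cpu_mb}M",  # memory per core, in megabytes
        f"#SBATCH --time={time_limit}",              # maximum run time
        "",
        f"srun {command}",                           # launch the program on the allocation
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Hypothetical job: one task using 4 cores and 2 GB per core for one hour.
    print(make_batch_script("demo", 1, 4, 2048, "01:00:00", "./my_program"))
```

The generated text would typically be saved to a file and submitted from the login node with `sbatch`; the scheduler then queues the job until the requested cores, memory and time can be allocated.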

The CISM operates two clusters: Lemaitre and Manneback. Lemaitre was named after Georges Lemaitre (1894–1966), a Belgian priest, astronomer and professor of physics at UCLouvain. He is seen by many as the father of the Big Bang theory, and he also brought the first supercomputer to our University.

Manneback was named after Charles Manneback (1894–1975), Professor of Physics at UCLouvain. A close friend of Georges Lemaitre, he led the FNRS-IRSIA project to build the first supercomputer in Belgium in the 1950s.

You can see Manneback (left) and Lemaitre (right) in the picture below, which was taken during a visit by Cornelius Lanczos (middle) to the Collège des Prémontrés in 1959 (click on the image for more context).

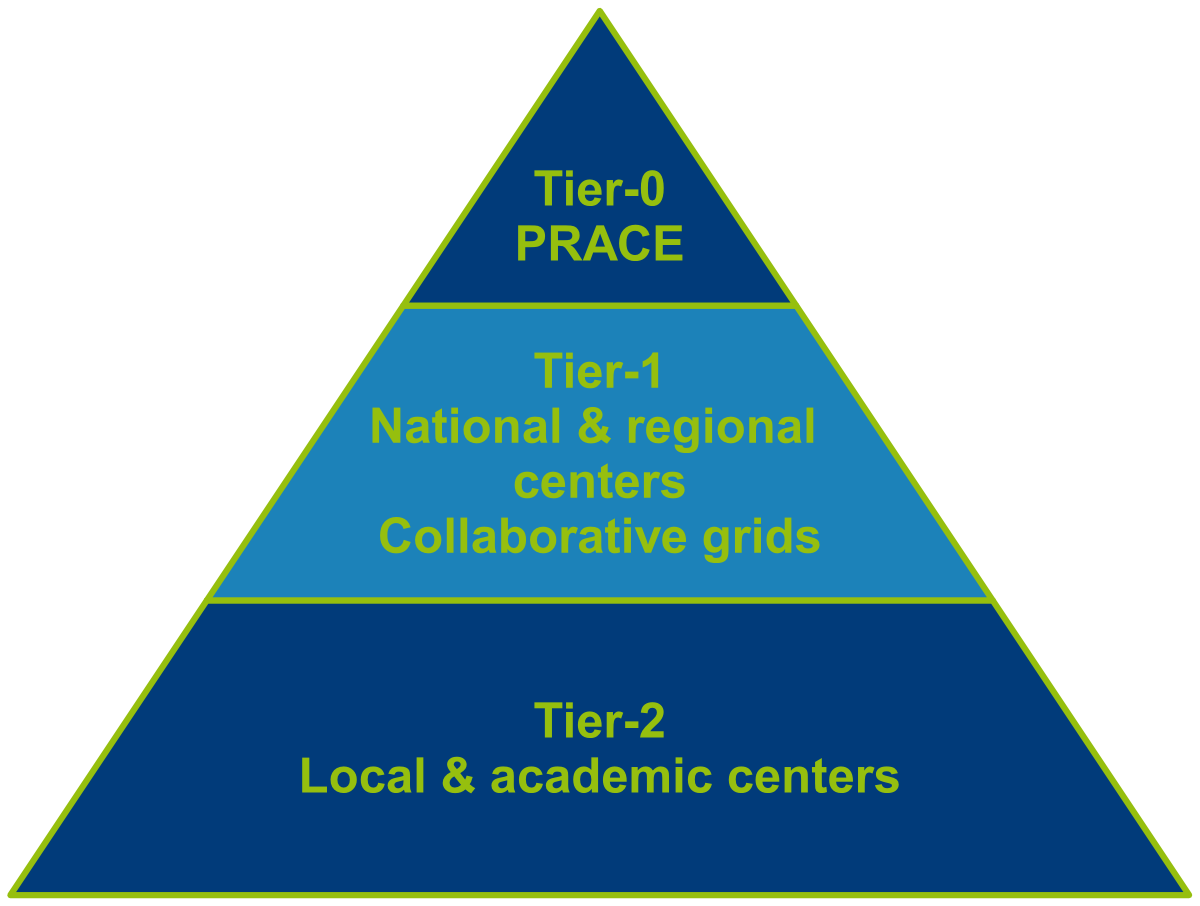

Besides Lemaitre and Manneback, and thanks to various collaborations (CÉCI, EuroHPC, Cenaero and PRACE), CISM users can access more clusters of various sizes. Clusters are organised according to their size into three categories: Tier-2, Tier-1 and Tier-0, with Tier-0 being the largest.

The general rule is that access to a higher category Tier-N requires demonstrating adequate proficiency in the Tier-(N+1) category.

List of clusters

| Cluster name | Funding | Size | Hosting institution | More information |
|---|---|---|---|---|
| Manneback | CISM users | Tier-2 | UCLouvain | This page |
| Lemaitre4 | CÉCI | Tier-2 | UCLouvain | https://www.ceci-hpc.be |
| Lyra | CÉCI | Tier-2 | ULB | https://www.ceci-hpc.be |
| NIC5 | CÉCI | Tier-2 | ULiège | https://www.ceci-hpc.be |
| Hercules | CÉCI | Tier-2 | UNamur | https://www.ceci-hpc.be |
| Dragon2 | CÉCI | Tier-2 | UMons | https://www.ceci-hpc.be |
| Lucia | Walloon Region | Tier-1 | Cenaero (Gosselies) | https://tier1.cenaero.be/fr/LuciaInfrastructure |
| Lumi | EuroHPC | Tier-0 | CSC (Finland) | https://docs.lumi-supercomputer.eu/hardware/ |

Access & conditions

- access to Tier-2 clusters is granted automatically to every member of the University, but a CÉCI account must be requested;

- access to Tier-1 clusters requires the submission of a Tier-1 project and a valid CÉCI account;

- access to Tier-0 clusters requires responding to a EuroHPC call for proposals and a valid UCLouvain portal account.

Note that while usage of the clusters is free, users are encouraged to contribute by requesting CPU hours in their scientific project submissions to funding agencies. Users are also requested to tag the publications that were made possible thanks to the CISM/CÉCI infrastructure.

About the cost

Access to the computing facilities is free of charge. Usage of the equipment for fundamental research has been free since 2017 for most researchers with normal usage.

Note

Although access is free, the acquisition model of the hardware for Manneback is solely based on funding brought by users.

However, we encourage users who foresee a large use of the equipment for a significant duration to contact us and include budget for additional equipment in their project funding requests. When equipment is acquired thanks to funds brought by a specific group, the equipment is shared with all users but the funding entity can obtain exclusive reservation periods on the equipment or request specific configurations.

If the expected usage does not justify buying new equipment, but the project’s budget includes computation time, the CISM can also bill the usage of the equipment for the duration of the project. Rates vary based on the funding agency (European, Federal, Regional, etc.) and the objective of the research (fundamental, applied, commercial, etc.).

Before 2017, if the usage of a research group (pôle de recherche) exceeded 200,000 hCPU (CPU hours), equivalent to the usage of about 23 cores during a full year, the cost was computed as a function of the yearly consumption as follows:

Rate applied in 2016, based on your research group's consumption in 2015:

- Below 200,000 hCPU: 0 €/hCPU

- Between 200,000 and 2,500,000 hCPU: 0.00114 €/hCPU

- Over 2,500,000 hCPU: 0.00065 €/hCPU
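The arithmetic behind these historical figures can be sketched as follows. This is only an illustration under stated assumptions: the text does not say whether a bracket's rate applied to the whole yearly consumption or only to the part above the threshold (the sketch assumes the whole consumption), nor how consumption exactly equal to a threshold was treated (the sketch places both boundary values in the middle bracket). A year is taken as 365 days, and the function names are hypothetical.

```python
HOURS_PER_YEAR = 24 * 365  # 8760 hours, ignoring leap years


def equivalent_cores(cpu_hours):
    """Number of cores kept busy for a full year by the given CPU hours."""
    return cpu_hours / HOURS_PER_YEAR


def rate_2016(cpu_hours):
    """Rate in EUR per CPU hour for a given yearly consumption.

    Assumption: values exactly on a threshold fall in the middle bracket.
    """
    if cpu_hours < 200_000:
        return 0.0
    if cpu_hours <= 2_500_000:
        return 0.00114
    return 0.00065


def yearly_cost(cpu_hours):
    """Cost in EUR, assuming the bracket rate applies to the whole consumption."""
    return cpu_hours * rate_2016(cpu_hours)


if __name__ == "__main__":
    # 200,000 CPU hours spread over a year is a little under 23 cores.
    print(f"{equivalent_cores(200_000):.1f} cores")
    # Hypothetical group that consumed one million CPU hours in 2015.
    print(f"{yearly_cost(1_000_000):.2f} EUR")
```

Under these assumptions, a group consuming one million CPU hours would have been charged about 1,140 €. If the rates were instead applied marginally, the result above the first threshold would differ.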

For 2017 and beyond, thanks to a contribution from the SGSI to the budget of the CISM, usage is free of charge for users who do not have specific funding for computational resources. Users funded by a Regional, Federal, European or Commercial project with specific needs should contact the CISM team for a quote.